- AI governance decisions can have significant impact on both the workforce and the clients of an organization, making it crucial for executive leadership to consider the reliability and safety limitations of artificial intelligence models.

- With more than 1000 new generative AI tools launching last month alone, navigating the expanding AI landscape presents a challenge for businesses and society as a whole.

- Market consolidation is likely, mainly because the most powerful tools require immense resources to develop. Later, however, we may see widespread democratization as AI models become easier to train locally.

- You should familiarize yourself with the available AI solutions and their most appropriate applications so that your business can achieve a measurable ROI.

An Embarrassment of Riches

The world is entering a new era of artificial intelligence (AI), bringing both limitless possibilities and unprecedented risks. Automation is causing a reinvention of jobs and economic disruption, presenting challenges for executive leadership and serious impact on the workforce.

CEOs and upper management need to establish sufficient AI governance to determine the best AI options for their organization.

In our last article, we explored integrating AI into existing processes, and in this article, we provide context for different AI models. By analyzing the advantages and disadvantages of each, you can make informed decisions to leverage AI in a way that suits your needs.

Let's dive in!

Exploding Growth

To fully grasp how artificial intelligence can help you most effectively, or drive you out of business, you need a good understanding of its background.

Data science and machine learning have seen tremendous growth in recent years. Artificial neural networks, loosely inspired by the way our own brains learn, have led to today's boom, with natural language processing (NLP) being the fastest-growing area of AI.

The main advantage of natural language processing is that you can interact with your computer conversationally, because the AI can now interpret the way humans talk. From now on, consider the main user interface of every digital tool to be your native tongue. Just like in science fiction, you're free to lean back in your chair and have the bot do your bidding. And your workforce can do the same.

OpenAI's ChatGPT is one of the most famous examples of NLP, and after its launch it was widely reported to be the fastest-growing consumer app to date.

GPT stands for Generative Pre-trained Transformer, a type of Large Language Model (LLM) designed to generate text in response to user prompts.

Since the release of ChatGPT (originally built on GPT-3.5) in November 2022, there have been more generative AI product launches than in the previous three years combined, with OpenAI leading the general-use LLM market.

The newest model, GPT-4, is already multimodal, meaning that it can accept images as well as text as input. GPT-4 is also widely regarded as considerably more capable than GPT-3. OpenAI has not disclosed its size, but it is rumored to have at least a trillion parameters compared to GPT-3's 175 billion. Either way, the leap in capability changes the game.

How to choose between tools?

Although OpenAI is dominating the market, it's important to explore all available options, as the right combination of models and use cases can vary depending on your goals.

To help make sense of the myriad of generative AI options, we can analyze them along two axes:

- large models for general use vs. smaller, specialized solutions

- cloud-based online platforms vs. algorithms that run locally on your own systems

Reliable and safe

Specialized, locally integrated AI tools offer benefits such as privacy, domain knowledge, transparency, and scalability. However, they also come with limited versatility, higher costs, and the need for maintenance on your part. Self-hosting an AI model on your own systems is not yet trivial, although it should get easier over time, and it can be crucial for processing delicate information. This type of setup allows more security when dealing with protected client details, legal documents, or other sensitive matters.

But why feed this kind of data into an AI at all? Even a small-scale model can bring substantial benefits, potentially even doubling the productivity of your everyday processes.

Online providers may also change their terms and policies unilaterally, as seen with the recent backlash against Midjourney’s new moderation methods. In contrast, locally run algorithms that were developed with your unique needs in mind can be fully integrated into your company IT ecosystem, and will forever adhere to your policies alone.

Large and capable

Publicly available Large Language Models and generative AI offer benefits such as versatility and cost-effectiveness. They are highly capable and can seemingly do everything: generate, summarize or classify text, translate between languages, answer questions, analyze sentiment, create and moderate content, and so on.

You or some of your colleagues might have already asked an AI to perform such tasks to speed up your daily processes.

With plugins, some models can even write and format code, recognize speech, output video and images, and browse the internet in real time. OpenAI’s website reads:

“Though not a perfect analogy, plugins can be “eyes and ears” for language models, giving them access to information that is too recent, too personal, or too specific to be included in the training data. In response to a user’s explicit request, plugins can also enable language models to perform safe, constrained actions on their behalf, increasing the usefulness of the system overall.”

LLMs are well suited to assisting with everyday tasks, and can boost your productivity and creativity to an unprecedented level.

Because of this, knowing how to prompt the different models and how to give instructions that effectively convey your needs will be the single most important soft skill in the near future.

Current limitations

Although Large Language Models have gained massive popularity in recent months, they also come with limitations that should not be overlooked.

We want to home in on three key issues: reliability, safety and intelligence.

Reliability

As we all know, chatbots sometimes give blatantly wrong answers, and when pressed for an explanation, they often double down or fabricate a justification outright instead of correcting themselves.

If you have wondered why these “hallucinations” happen, we've got you covered. To understand them, we have to go a little deeper into how the models are trained. Here’s an (over)simplified summary:

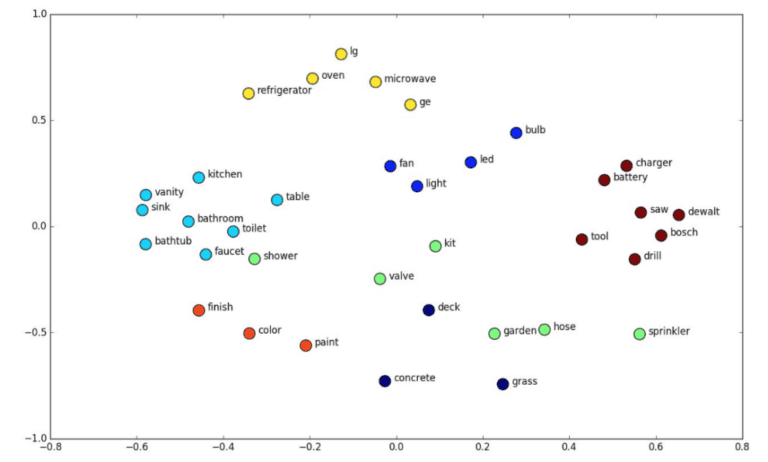

1. The first step in training a model is collecting a large number of text sources to build a training dataset. The text is then split into small units called “tokens”.

2. Then each token is encoded as a list of numbers, known as an embedding.

3. The embeddings are then mapped into a multidimensional space, also known as a latent space, where the distance between words reflects their degree of similarity.

4. The lion’s share of the training happens in this latent space, allowing for a deeper understanding of relationships between words.

5. Finally, the model leverages this space to process user queries, identifying the most suitable outputs based on your inputs (prompts). To simplify it even more, it’s predicting the most likely continuation based on statistics, just like autocomplete, but with a much better grasp of context.

6. The developers also often give feedback to the AI, rewarding desired behavior, and restricting harmful answers. This is called reinforcement learning from human feedback (RLHF).

7. These training steps can be repeated and improved upon to refine the accuracy and effectiveness of the model over time.
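Steps 2 and 3 can be illustrated with a minimal Python sketch. The three-dimensional vectors below are hand-picked, hypothetical values chosen only to make the idea visible; real models learn embeddings with hundreds or thousands of dimensions. Cosine similarity is used here as one common way to measure how close two embeddings are, which is an assumption of this sketch rather than a description of any particular model.

```python
import math

# Hypothetical toy embeddings, invented for illustration only.
EMBEDDINGS = {
    "cat": [0.9, 0.8, 0.1],
    "dog": [0.8, 0.9, 0.2],
    "invoice": [0.1, 0.2, 0.9],
}


def cosine_similarity(a, b):
    """Return the cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def most_similar(word):
    """Return the other word whose embedding lies closest to that of `word`."""
    others = (w for w in EMBEDDINGS if w != word)
    return max(others, key=lambda w: cosine_similarity(EMBEDDINGS[word], EMBEDDINGS[w]))


print(most_similar("cat"))  # prints "dog"
```

In this toy space, "cat" and "dog" point in almost the same direction, while "invoice" points elsewhere, so the two animals count as similar and the accounting term does not.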

The reliability issue stems from the fact that larger models have been trained on vast datasets that contain false and irrelevant information, which has been incorporated into their latent space. Teaching a model to be honest is not trivial either: achieving a high level of reliability and self-reflection would require human-like reasoning capabilities that these models lack. Until they can provide more accurate answers, strict human supervision is needed in high-stakes fields such as law, medicine, or enterprise management.
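To see why false training data leads to false answers, consider a deliberately tiny stand-in for a language model. The sketch below is not how an LLM works internally: it swaps the neural network for simple word-pair counts (a bigram model), and the corpus is a hypothetical example. It still shows the core problem, namely that a purely statistical predictor repeats whatever it saw most often, whether or not it is true.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus: the false statement appears more often than the true one.
CORPUS = [
    "the capital of australia is sydney",
    "the capital of australia is sydney",
    "the capital of australia is canberra",
]


def train_bigrams(sentences):
    """Count, for every word, which words follow it and how often."""
    follow_counts = defaultdict(Counter)
    for sentence in sentences:
        words = sentence.split()
        for current, following in zip(words, words[1:]):
            follow_counts[current][following] += 1
    return follow_counts


def predict_next(follow_counts, word):
    """Return the most frequent follower of `word`, or None if the word was never followed."""
    followers = follow_counts.get(word)
    if not followers:
        return None
    return followers.most_common(1)[0][0]


model = train_bigrams(CORPUS)
print(predict_next(model, "is"))  # prints "sydney", the more frequent but wrong answer
```

The predictor has no notion of truth, only of frequency, which is why cleaning the training data or adding human feedback matters so much.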

Currently, highly specialized fields may require the development of unique models to be integrated into their practices, as domain knowledge and accuracy are a must in their cases.

Safety

Safety is the second significant issue with LLMs. Online language models automatically send every input to their respective developers for processing, posing a risk to privacy and data protection. For example, even if you opt out of having your data used in training, every prompt you enter into ChatGPT will still be sent to OpenAI's and Microsoft's servers, vetted, and then stored temporarily. Businesses that need to go the extra mile in confidentiality may therefore consider running AI tools locally, without streaming any data about their usage.

Intelligence

Lastly, despite the recent hype, LLMs are not as intelligent as they seem. While GPT-4 has been praised for its "true intelligence", such claims are disputed. Stanford University's Computer Science department argues that while LLMs have improved language transformation algorithms in general, they still lack genuine reasoning capabilities.

So, for now, we have yet to see the advent of Artificial Superintelligence, and must work around LLMs’ current disadvantages, such as their unreliability and the current impossibility of integrating them fully.

Future market consolidation

As we’ve discussed, the AI landscape is evolving at a rapid pace, with over 1000 new AI tools released last month alone. The options are overwhelming, and we're only at the beginning of the hype curve.

Recent advancements in generative AI have given rise to some incredible applications, including mind-reading, photorealistic images, and even robot dogs that you can talk to and order around.

While ChatGPT is attracting a lot of attention, the AI landscape remains highly competitive, providing targeted solutions that are tailored to specific domains.

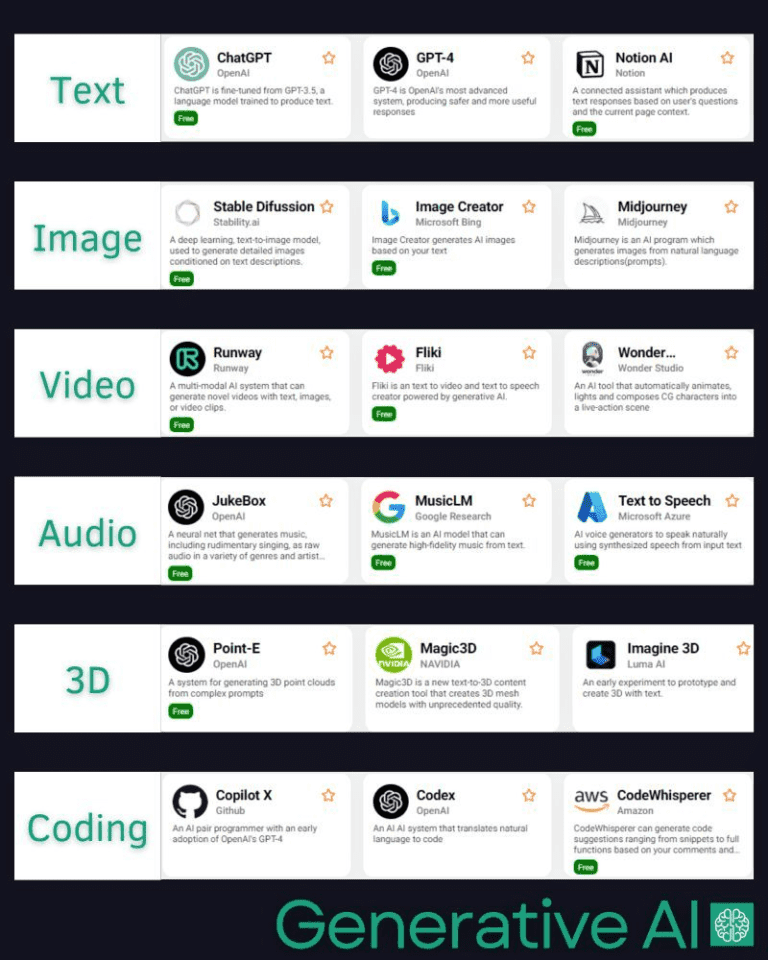

Source: Generative AI blog

As the number of AI tools continues to increase, the market may become more saturated with small-impact projects than ever before. Meanwhile, traditional apps and platforms are being driven out of business by AI-augmented alternatives, or by the sheer capabilities of advanced LLM chatbots alone.

Thus, market consolidation seems inevitable. On the one hand, the largest AI providers have the most resources to develop neural networks that learn faster and from more information. If Artificial General Intelligence eventually arrives, much of the development work may be done by the AGI itself.

On the other hand, we can also expect further democratization of AI technologies. An internal Google document predicts that the future of AI may be dominated by free and open-source options, as the tech becomes more and more available. While OpenAI is currently at the top of the game, it is quickly being outpaced in the frequency of new releases: Meta, Google, Hugging Face and Stability AI have all come forward with open-source updates on an almost weekly basis.

Online tools and browser plugins are already using LLM APIs for a growing range of tasks, so it is a safe bet that your old-school internet browsing experience will transform into something that is increasingly customizable and AI-based.

Over the next two years, besides a growing market, we can expect AI to become gradually more reliable and easier to integrate and use on your own devices, coupled with the rise of open-source availability.

Key Takeaway

It’s important to remember that you can achieve the best results by utilizing all available AI options in conjunction with each other. By doing so, you gain greater flexibility and customization to meet the specific needs and requirements of different users and use cases within your organization.

In our next article, we'll feature an insider story from one of our best Data Scientists about the development background of a big NLP project. Stay tuned for the latest advancements in AI, and stay ahead of the curve in the face of the ongoing industrial revolution!