At a recent round table discussion in Hungary, experts from the data industry shared their predictions for the modern data stack in 2023.

Anticipating future events in times of uncertainty can be a daunting task. Forecasting them accurately, with a comprehensive understanding of all the possibilities, is not a one-person job.

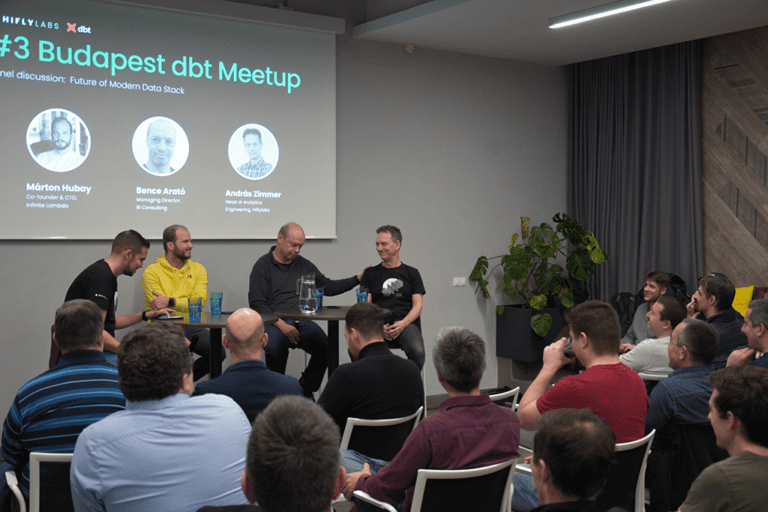

A diverse range of perspectives is needed to eliminate any false positives from the equation. To gain insights into the year ahead of us, we joined forces with some of the brightest minds in our local data community at the third Budapest dbt Meetup, hosted by Hiflylabs.

Let's dive headfirst into the future of the Modern Data Stack with a lively debate with András Zimmer, the Head of Analytics Engineering at Hiflylabs, Bence Arató, the Co-founder and Managing Director of BI Consulting, and Márton Hubay, the Co-founder and CTO of Infinite Lambda!

#1 Prediction: GitHub Copilot or similar AI-based code generators will be used extensively by the majority of analytics engineers within a year.

A.B.: First, I have to recall whether I answered the poll question before or after ChatGPT launched. Back then, I had some reservations about it. And, as you might know, there are also no-code, low-code, or zero-code methods. But if we phrase the question as “Will it be widespread among data engineers who typically write code, for example in a dbt environment?”, then I believe this is already the case. I saw some GitHub statistics saying that half of the code in their repositories is generated with Copilot support. Honestly, I didn’t expect usage to be that extensive in such a short time.

H.M.: I have some practical experience with these technologies. We started using them, testing for compatibility and accuracy. We can already see that they work very well with Python, and not that well with Infrastructure as Code.

So, to answer the question directly, since it mentions analytics engineering: I think it can’t work well yet, because the AI tools don’t use the latest sources. I think, at this moment, they have only processed public repositories up to 2021. But if we look at dbt analytics engineering repos, we can see exponential growth in their numbers; their volume probably doubled in 2022. So we can expect accuracy to improve soon.

I must admit, I see the development there. And as I said, some technologies are already in a usable state.

But let me add a few thoughts here. We are talking about code, but I see two other connection points in the data world. Firstly, how the vendors will use it, and what Snowflake and dbt will build on top of it. I would expect development in governance; that is where we could find real advantages. Secondly, it would be interesting to see how the appearance of AI will rewrite data modeling. I can imagine a future where the model is optimized for the machine itself and only the top layer is designed for the user. But of course, this won’t happen soon.

Z.A.: I have no doubt that we will generate a lot of code with AI or automated tools within a reasonable time. But how likely is it to happen in 2023? I’m not too confident about that, with or without ChatGPT. I think this will be a slower process. The data world is conservative. I understand that the dbt world within the data world is progressive, but still, things usually happen more slowly in the data world.

Another important aspect is that, as I see it today, these tools can support certain types of code or tasks well enough, and others not so much. A significant part of our work is understanding what needs to be done and translating that into how to do it with data. In the end, we will write some code, one way or another. But that first part cannot be grasped by AI at this moment. I am not saying it never will be, but from my perspective, it’s just not there yet.

Back in the day in data warehouses, we built something called "straight raw to stage loaders": you receive the source data and load it directly into the tables. That was a big chunk of not-too-sophisticated work, and you had to do a lot of it. I think this type of code will be replaced very soon by these generator tools.
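For readers who have not written one, a raw-to-stage loader of the kind described above can be sketched roughly as follows. This is only an illustration and not code from the discussion: the function name `load_raw_to_stage`, the table name `stg_orders`, the sample data, and the choice of an in-memory SQLite database are all assumptions made to keep the example self-contained.

```python
import csv
import io
import sqlite3


def load_raw_to_stage(conn, table, csv_text):
    """Load CSV text into a staging table as-is, with every column stored as TEXT."""
    reader = csv.reader(io.StringIO(csv_text))
    header = next(reader)  # first row holds the source column names

    # Quote identifiers so unusual column names do not break the DDL.
    columns = ", ".join(f'"{name}" TEXT' for name in header)
    conn.execute(f'DROP TABLE IF EXISTS "{table}"')
    conn.execute(f'CREATE TABLE "{table}" ({columns})')

    # No transformation or typing here: staging mirrors the source data.
    placeholders = ", ".join("?" for _ in header)
    rows = list(reader)
    conn.executemany(f'INSERT INTO "{table}" VALUES ({placeholders})', rows)
    conn.commit()
    return len(rows)


# Hypothetical sample extract standing in for a real source file.
sample = "order_id,customer,amount\n1,Anna,19.90\n2,Béla,5.00\n"
conn = sqlite3.connect(":memory:")
loaded = load_raw_to_stage(conn, "stg_orders", sample)
```

The logic is almost entirely mechanical and driven by the shape of the source, which is why repetitive loaders like this are a plausible early candidate for code generation.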

#2 Prediction: The year 2023 will be all about cloud and SaaS cost optimization.

H.M.: There are a lot of tools and companies specialized in optimizing Snowflake costs. So, it’s surely possible. But I think the approach is not to cut costs directly, but rather to get the most out of what companies already have: not doing X, but 2-3-4 times X. I don’t see why it wouldn’t be possible to optimize. Typically, all data warehouses have to face this. Things tend to sprawl, mostly when data governance is lacking. The same is true for cloud platforms, from the Cloud Mentoring aspect.

A.B.: For me, as a true data-loving man, the idea of spending less on data goes completely against my nature.😊

But to take this question seriously, there will be more data and more ways of processing it. I don’t think we should reduce or optimize costs for their own sake; ROI is a different question. I agree with what Márton said. Saving money on the bills is not the goal. The bills can even be higher if there is business value in return. The more data you process, the more you help your business. It was a memorable moment last year when Snowflake announced that they would make processing more efficient. The press claimed they were digging their own grave, since that way they would reduce their revenue. But if you give higher performance for the same amount of money, the customer will spend one and a half times more. Anyway, there are always a lot of opportunities in optimization.

Z.A.: I think it’s not only possible to optimize but it’s also necessary. Nonetheless, I agree that at the end of the day, people won’t spend less, but approximately the same or even a bit more. However, they will do way more things with the same investment. It’s an interesting question, how much we can measure the benefits of what is happening here. We talk about the rate of return and ROI. But many times, what data engineers do cannot necessarily be traced back directly to the created business value. I’m not saying there is no relation, but sometimes it’s not that obvious. So, it’s hard to say what to turn off, or turn down, or optimize.

#3 Prediction: A significant consolidation will start among the countless tools of the Modern Data Stack; the consensus on the “best-in-class” tools will strengthen.

A.B.: The Modern Data Stack follows the curve of the classic Gartner Hype Cycle. We run it up and hype it. Then it plummets into the Trough of Disillusionment, and in the end, it settles on the Plateau of Productivity. It can be debated whether we are already heading toward the Plateau of Productivity or are just about to fall into the Trough of Disillusionment.

Now, issues have started to arise with these dozens of different products. Last year there was a lot of debate around bundling versus unbundling as a result of this redundancy.

But to answer the question concretely, the previous years of "plenty" were a direct consequence of the state of the financial market. New products still appear, of course. I often put on my "market analyst shoes" to monitor product launches and announcements, and there is at least one promising new product each week.

I see this as a result of the many product categories that still lack a really good solution, where people believe they can do better.

There was a 2023 Data Kickoff meetup last week. We were talking about how the frontend question is not solved yet. Should it be a BI tool, a dashboard, a notebook, or maybe a mashup of these; to be clicky or not to be clicky? So, new vendors constantly appear because there is no standard solution that could be the “real good” frontend layer in a modern data platform.

On the other hand, it’s interesting to see that dbt doesn’t even have a real competitor in its own segment. Meanwhile, there are 5-6 competing metadata management products. I also see that there are no new arrivals in these crowded areas. If there are already five competing players, it’s hard to find either a technical or a business justification for creating a sixth. How will it be different, and to whom will you sell it? These are always the two most crucial questions.

To summarize, where I see these missing solutions is the front end. You might hear “dashboard is dead”, but still, there isn’t a better solution. The other topic is data governance. There are the big vendors and the tiny start-ups. I miss the middle layer here.

Lastly, I would like to go back to the first topic and bring up one more idea: AI solutions that write documentation. Every developer likes to write code, but documentation is a different story. I want to see automated AI solutions where the sources, metadata, and relationships are collected and handled appropriately. Currently, there are at least 15 different tools for this purpose, but none are truly effective.

H.M.: I see that everyone is moving forward on the maturity curve. Some are ahead, some behind. As Bence said, there are gaps everywhere. However, in a few years, vendors should be able to fill these.

Money was abundant in previous years. Many players in the stack will need their next investment round this year or next. So, those who cannot deliver the numbers will be pushed out of the stack. Therefore, I think we will see some dropouts.

To answer the last part of the question, I think the growth won’t stop in 2023 but its rate will slow down. People always find money to fill the gaps, but it won’t be as it was in the past two years. A report with Andreessen Horowitz also shows a peak in 2021-2022 but also points out that the end of 2022 was a nosedive. I think we will experience some faltering, but not a total shutdown. So, we shouldn't worry about the industry itself.

You also asked what the gaps were or where we could expect some buzzing in the stack. I expect real-time technologies to be among them. I can’t see alternatives here or even tools. There may be a few, but they are immature.

Z.A.: If we are looking for the “best-in-class” tools, we need to consider what is reasonable and good for a given customer’s given context. In my opinion, besides dbt, there aren’t any tools I would suggest on the spot for a case with a given purpose.

Am I expecting consolidation? If by that you mean that some products will merge, and there will be fewer participants on the market overall, then I don’t expect it this year. Quite the opposite, I think less successful products will also survive this year. I can imagine more of this consolidation in about 2-3 years.

Will there be a lot of new products? I don’t think there will be as many as there used to be. My gut tells me that we will see one or two surprisingly successful products this year that we haven’t seen before. I also see opportunities in the directions that the others previously mentioned. Especially regarding real-time, as customers want feedback to be faster and faster. These desires are not satisfied as dbt mostly follows batch-based thinking.

I can see some buzzing around Databricks as well, but that’s a different story...

_______

These were the statements discussed and the reactions to them. Fortunately, the debate didn’t end there, as the audience had further topic suggestions. The questions mainly concerned how sustainable the new technologies around the Modern Data Stack are. It turned out that the danger of becoming outdated can be real in the enterprise environment, especially when it comes to APIs. However, when the core projects are open source, that provides an extra safety net and a significant boost from the community. The other perspective was that picking technologies that have alternatives on other clouds is always a safer choice.

There were a few questions that touched upon the business side, such as what the expected sales pitches and data project trends could be in 2023. All debaters agreed that this year will be all about optimization and short-term achievements. Mark added an eloquent comment: “This will be the year of the vole”, which means that project applicants must first prove how much they can achieve with small investments in the shortest time possible. We can say the market is impatient. However, with small but visible improvements, both in the numbers and on the user end, we can find plenty of opportunities in the upcoming years.

At the end of the night, the future of the modern data stack seemed slightly different from every perspective. Only time will tell which predictions for the Modern Data Stack in 2023 come true, and we can't wait to draw our conclusions at the close of the year.